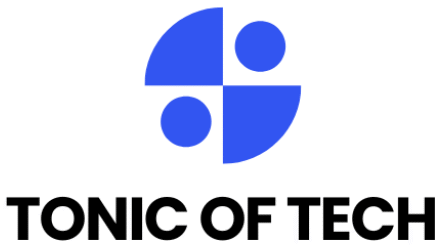

AGI vs AI: What’s the Real Difference?

The terms AGI and AI are often used interchangeably, but they mean very different things. This guide explains the difference in plain language, gives concrete examples, and outlines timelines and risks so you can separate what matters now from what may come later.

What is AI?

Artificial intelligence (AI) refers to algorithms and systems that perform tasks that would normally require human intelligence. Today’s most common AI systems are narrow AI: they classify images, translate text, power search, or generate content. These models are trained on data for specific jobs and don’t transfer their skills easily to unrelated tasks.

Modern AI tools power search engines, medical imaging, and virtual assistants. According to industry surveys, over half of organizations now use at least one AI capability in their business processes, which shows how widely narrow AI has already been adopted.

What is AGI?

Artificial General Intelligence (AGI) means a system that can perform any intellectual task a human can and learn new tasks without task-specific retraining. AGI implies flexible reasoning, broad commonsense understanding, and transfer learning across very different domains.

AGI is still hypothetical. Researchers debate whether AGI will emerge from scaling current models, entirely new architectures, or hybrid human-AI systems. The difference between AGI and AI is therefore not only technical but also philosophical and practical.

AGI vs AI difference: key comparisons

Scope and capability

- AI (narrow): Built for one domain, such as image tagging, spam filtering, or speech recognition. It excels within a defined scope.

- AGI: Would handle many types of tasks, from writing code to planning complex projects and learning new skills independently.

Learning and generalization

- Narrow AI often requires large labeled datasets and fine-tuning for each task.

- AGI would demonstrate human-like generalization: learning new tasks from few examples and transferring knowledge across domains.

Goal orientation and reasoning

- AI systems follow optimized objectives and reward functions set by engineers. They can be brittle if objectives misalign with real intent.

- AGI would need sophisticated goal structures and self-directed reasoning, raising complex alignment and safety questions.
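The brittleness of engineered objectives can be shown with a toy sketch. It assumes a hypothetical content recommender whose written-down objective is clicks, while the engineer's real intent is reader satisfaction. All names and numbers are invented for illustration and do not describe any real system.

```python
# Toy illustration: an optimizer maximizes the objective it is given,
# not the intent behind it. All data below is hypothetical.

def proxy_reward(article):
    """The objective the engineer wrote down: raw clicks."""
    return article["clicks"]

def intended_value(article):
    """What the engineer actually wanted: clicks from satisfied readers."""
    return article["clicks"] * article["satisfaction"]

candidates = [
    {"title": "Clear explainer", "clicks": 100, "satisfaction": 0.9},
    {"title": "Clickbait headline", "clicks": 300, "satisfaction": 0.1},
]

# The system picks whatever scores highest on the proxy objective.
chosen_by_proxy = max(candidates, key=proxy_reward)
# A system that captured the real intent would pick differently.
chosen_by_intent = max(candidates, key=intended_value)

print("Optimizing the proxy picks:", chosen_by_proxy["title"])
print("Optimizing the intent picks:", chosen_by_intent["title"])
```

The two objectives disagree on the same data, which is the gap between "what was specified" and "what was meant" that alignment work tries to close.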

Safety and governance

- With narrow AI, safety focuses on bias, privacy, and robustness.

- For AGI, safety includes control, value alignment, and long-term existential risks. Governance for AGI would need coordinated international frameworks, unlike most current AI regulation, which targets specific applications.


How close are we to AGI?

Predictions vary widely. Some researchers argue that scaling current models will eventually produce AGI; others say new conceptual breakthroughs are needed. There is no consensus timeline: estimates range from years to decades. What is clear is that progress in model scale, compute, and data has accelerated capabilities, but human-level general intelligence remains unproven.

Research labs such as OpenAI and DeepMind, along with academic surveys of researchers, regularly revisit timelines and safety strategies. See the OpenAI and DeepMind blogs for their current perspectives.

What AGI would mean for society

AGI could transform labor, creativity, science, and defense. Benefits might include radically faster scientific discovery, personalized education, and new economic productivity. Risks include job disruption, concentration of power, misuse, and existential concerns if alignment fails.

Policymakers and technologists stress preparedness: investing in robust safety research, equitable access, and legal frameworks. The Stanford AI Index and industry reports track capability growth and societal impact trends.

How to think about AGI vs AI as a user or leader

- For users: Focus on the benefits narrow AI offers today (productivity, automation, and better tools) while staying informed about long-term AGI debates.

- For leaders: Invest in responsible AI practices such as data governance, fairness, and security. Prepare contingency plans for advanced capabilities and support public dialogue on AGI governance.

Practical steps: adopt clear AI policies, fund safety research, and build human-in-the-loop systems that keep people in control.
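One common way to build a human-in-the-loop system is to let the model act on its own only when it is confident and to send everything else to a person. The sketch below is a minimal illustration under that assumption. The `Prediction` type, the `route` function, and the 0.85 threshold are hypothetical and would need tuning for any real use case.

```python
from dataclasses import dataclass

@dataclass
class Prediction:
    label: str
    confidence: float  # model's confidence, from 0.0 to 1.0

# Hypothetical cutoff: below this, a person makes the final call.
REVIEW_THRESHOLD = 0.85

def route(prediction: Prediction, threshold: float = REVIEW_THRESHOLD) -> str:
    """Decide whether a prediction is applied automatically or reviewed by a human."""
    if prediction.confidence >= threshold:
        return "auto_approve"
    return "human_review"

print(route(Prediction(label="spam", confidence=0.97)))
print(route(Prediction(label="spam", confidence=0.60)))
```

Raising the threshold sends more cases to people, which costs reviewer time but keeps humans in control of borderline decisions.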


Conclusion

The AGI vs AI debate comes down to scope and potential impact. Narrow AI already reshapes industries; AGI remains a powerful but uncertain future possibility. Learn the difference, prioritize safe deployment today, and follow credible research as capabilities evolve.

FAQs

1. What is the main difference between AGI and AI?

The main difference is scope: AI (narrow AI) solves specific tasks using specialized models, while AGI would perform any intellectual task humans can do and transfer learning across domains. AGI implies flexible general reasoning; narrow AI does not.

2. Is AGI just a bigger AI model?

Not necessarily. Some believe scaling models could lead to AGI, but others argue that new architectures, reasoning abilities, or cognitive frameworks are needed. The timeline and path to AGI remain uncertain.

3. Will AGI replace jobs more than current AI?

AGI could have broader effects than narrow AI because of its general capabilities. Narrow AI already automates many tasks; AGI could automate higher-level cognitive work. Planning and social policy are essential to manage transitions.

4. Are current AI safety practices enough for AGI?

Current practices (bias audits, robustness testing) help for narrow AI, but AGI requires deeper safety research: alignment, control, and international governance. Experts urge investment in long-term safety now.

5. Where can I read more about AGI research and perspectives?

Authoritative sources include research blogs and reports from OpenAI (https://openai.com/blog/), DeepMind (https://deepmind.com/blog), and the Stanford AI Index. For industry trends, see McKinsey’s AI overview.